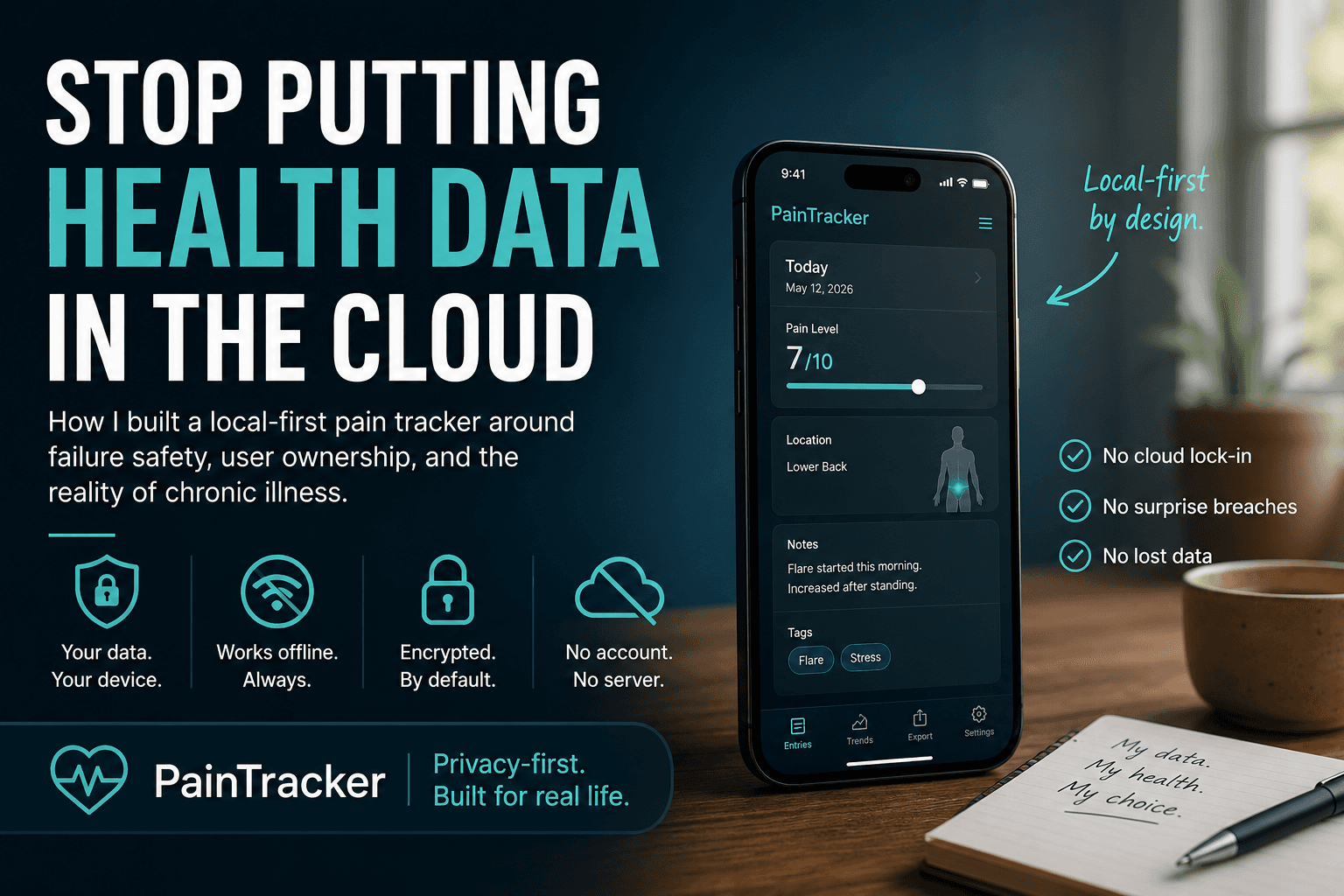

Stop Putting Health Data in the Cloud by Default

How I built a local-first pain tracker around failure safety, user ownership, and the reality of chronic illness.

Most health apps start with the wrong assumption.

They assume the user is stable.

Stable enough to create an account. Stable enough to manage passwords. Stable enough to understand privacy settings. Stable enough to log consistently. Stable enough to recover when the app fails.

That is not how chronic pain works.

When someone is in a flare, software has one job before every other feature: do not make the situation worse.

I built PainTracker because I could not find an app that understood this. Most health trackers treat failure as an edge case. They design for the happy path: the user who opens the app daily, logs everything, has a stable internet connection, and trusts that a server somewhere is handling their most sensitive data responsibly.

That is not who needs a pain tracker most.

The problem with cloud-by-default health apps

When a health app stores your data on a server by default, it makes several decisions on your behalf.

It decides that server uptime matters more than local availability. It decides that convenience of sync is worth the risk of a breach. It decides that your most vulnerable data belongs in an account. Pain levels. Medication logs. Mood entries. Injury records. All tied to an email address that could be phished, leaked, or subpoenaed.

None of these are decisions users are asked to make. They are made by the architecture.

For most apps, that is a defensible tradeoff. For health data belonging to people in crisis, it is not.

The person logging a flare at 2am does not want to be told their session expired. The person building a WorkSafeBC claim does not want their evidence living on a startup's server that may not exist in three years. The person with brain fog does not need a password reset flow standing between them and their own records.

What local-first actually means

Local-first does not mean sync is never available. It means the data exists on your device first, and everything else is opt-in.
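A minimal sketch of that ordering, not PainTracker's actual code: the local write always happens first, and sync runs only if the user has opted in. The names `LocalStore`, `SyncTarget`, and `saveLocalFirst` are hypothetical and exist only for this illustration.

```typescript
// Hypothetical interfaces for illustration; not taken from PainTracker.
interface LocalStore<T> {
  put(item: T): Promise<void>;
}

interface SyncTarget<T> {
  push(item: T): Promise<void>;
}

type SaveResult = { saved: true; synced: "disabled" | "ok" | "deferred" };

// Write locally first. Sync is optional, and a sync failure never fails the save.
async function saveLocalFirst<T>(
  item: T,
  local: LocalStore<T>,
  sync?: SyncTarget<T>, // undefined means the user has not opted in
): Promise<SaveResult> {
  // The device copy is the source of truth; only an error here rejects.
  await local.put(item);

  if (!sync) return { saved: true, synced: "disabled" };

  try {
    await sync.push(item);
    return { saved: true, synced: "ok" };
  } catch {
    // Offline or server down: the entry is already safe on the device.
    return { saved: true, synced: "deferred" };
  }
}
```

The point of the shape is that the network sits behind the local write, so nothing a server does can lose an entry.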

In PainTracker, every entry is stored in IndexedDB. The app is a PWA, which means it installs to your home screen, loads from cache when offline, and runs entirely in the browser. There is no required account. There is no default database on my server holding your health records.
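As a rough illustration of what storing an entry in IndexedDB can look like, here is a sketch. The database name, store name, index, and `PainEntry` shape are all assumptions made for the example and do not reflect PainTracker's real schema.

```typescript
// Assumed entry shape for illustration only.
interface PainEntry {
  id: string;
  recordedAt: string; // ISO 8601 timestamp
  painLevel: number; // 0 to 10
  note?: string;
}

const DB_NAME = "pain-tracker-sketch"; // hypothetical name
const STORE = "entries"; // hypothetical object store

// Open (or create) the database and its single object store.
function openDb(): Promise<IDBDatabase> {
  return new Promise((resolve, reject) => {
    const req = indexedDB.open(DB_NAME, 1);
    req.onupgradeneeded = () => {
      const store = req.result.createObjectStore(STORE, { keyPath: "id" });
      store.createIndex("byRecordedAt", "recordedAt");
    };
    req.onsuccess = () => resolve(req.result);
    req.onerror = () => reject(req.error);
  });
}

// Resolve only after the transaction commits, so "saved" means durable.
function saveEntry(db: IDBDatabase, entry: PainEntry): Promise<void> {
  return new Promise((resolve, reject) => {
    const tx = db.transaction(STORE, "readwrite");
    tx.objectStore(STORE).put(entry);
    tx.oncomplete = () => resolve();
    tx.onerror = () => reject(tx.error);
    tx.onabort = () => reject(tx.error);
  });
}

// Build a record without touching the database; validates the pain scale.
function makeEntry(painLevel: number, note?: string, now: Date = new Date()): PainEntry {
  if (!Number.isInteger(painLevel) || painLevel < 0 || painLevel > 10) {
    throw new RangeError("painLevel must be an integer from 0 to 10");
  }
  return { id: crypto.randomUUID(), recordedAt: now.toISOString(), painLevel, note };
}
```

In a browser, usage would be along the lines of `const db = await openDb(); await saveEntry(db, makeEntry(7, "flare"));`.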

This is possible because modern browsers are more capable than most developers assume.

IndexedDB gives you a structured, queryable, persistent database that lives inside the browser. Web Crypto gives you real cryptographic primitives: AES-GCM for authenticated encryption and PBKDF2 for deriving a key from a passphrase, the same primitives used in production security systems. Service Workers give you offline routing so the app behaves consistently whether the user has a connection or not.
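To make the Web Crypto part concrete, here is a hedged sketch of passphrase-based encryption for a single entry: PBKDF2 derives an AES-GCM key, and AES-GCM encrypts the serialized entry. The iteration count, salt and IV sizes, and the `EncryptedEntry` shape are assumptions for illustration. This is not PainTracker's implementation.

```typescript
// Assumed container shape; salt and IV are not secret and are stored with the ciphertext.
interface EncryptedEntry {
  salt: Uint8Array; // PBKDF2 salt
  iv: Uint8Array; // AES-GCM nonce, fresh for every encryption
  ciphertext: Uint8Array;
}

const PBKDF2_ITERATIONS = 600_000; // assumed value; tune for target devices

// Derive a non-extractable 256-bit AES-GCM key from a passphrase.
async function deriveKey(passphrase: string, salt: Uint8Array): Promise<CryptoKey> {
  const material = await crypto.subtle.importKey(
    "raw",
    new TextEncoder().encode(passphrase),
    "PBKDF2",
    false,
    ["deriveKey"],
  );
  return crypto.subtle.deriveKey(
    { name: "PBKDF2", salt, iterations: PBKDF2_ITERATIONS, hash: "SHA-256" },
    material,
    { name: "AES-GCM", length: 256 },
    false,
    ["encrypt", "decrypt"],
  );
}

async function encryptEntry(passphrase: string, entry: unknown): Promise<EncryptedEntry> {
  const salt = crypto.getRandomValues(new Uint8Array(16));
  const iv = crypto.getRandomValues(new Uint8Array(12));
  const key = await deriveKey(passphrase, salt);
  const plaintext = new TextEncoder().encode(JSON.stringify(entry));
  const ciphertext = new Uint8Array(
    await crypto.subtle.encrypt({ name: "AES-GCM", iv }, key, plaintext),
  );
  return { salt, iv, ciphertext };
}

// Rejects if the passphrase is wrong or the ciphertext was tampered with.
async function decryptEntry<T>(passphrase: string, enc: EncryptedEntry): Promise<T> {
  const key = await deriveKey(passphrase, enc.salt);
  const plaintext = await crypto.subtle.decrypt(
    { name: "AES-GCM", iv: enc.iv },
    key,
    enc.ciphertext,
  );
  return JSON.parse(new TextDecoder().decode(plaintext)) as T;
}
```

Because AES-GCM is authenticated, a wrong passphrase produces a rejected promise rather than garbage output. That is also why a lost passphrase means lost data, so the user needs to hear about that tradeoff up front.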

The architecture is not a workaround. It is a deliberate choice about where trust lives.

When your data is encrypted on your device, I cannot read it. If my server gets breached, local health entries are not sitting there waiting to be stolen. If you stop using the app, your data does not remain on someone else's infrastructure indefinitely.

What failure safety actually requires

The person this was built for is not calmly sitting at a desk comparing dashboards. They may be lying in bed at 2am, trying to record a flare before the memory dissolves. That is the moment the architecture has to respect.

Building for failure safety is not about adding error messages. It is about making sure every failure mode leaves the user in a state that does not hurt them.

For a chronic pain tracker, that means:

- If the network drops, the app still works. No silent failures, no lost entries.
- If recovery depends on a password, the app has to explain the tradeoff clearly before the user is locked out, not after.
- If browser storage fills up, the app warns the user before data is at risk, not after.
- If the user needs to export their records for a doctor or insurer, they can do that without my involvement.
- If the app stops being maintained, the user's data is still accessible and portable.
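The storage case can be sketched with the browser's StorageManager API. This is an illustrative sketch, not PainTracker's code: the 80% warning threshold and the names `assessStorage`, `checkStorage`, and `requestPersistence` are assumptions.

```typescript
type StorageStatus = "ok" | "warn" | "unknown";

// Pure decision: warn once usage crosses a fraction of the quota.
function assessStorage(
  usage: number | undefined,
  quota: number | undefined,
  warnAt = 0.8, // assumed threshold
): StorageStatus {
  if (usage === undefined || quota === undefined || quota <= 0) return "unknown";
  return usage / quota >= warnAt ? "warn" : "ok";
}

// Ask the browser for an estimate; report "unknown" where the API is missing.
async function checkStorage(): Promise<StorageStatus> {
  if (typeof navigator === "undefined" || !navigator.storage?.estimate) {
    return "unknown";
  }
  const { usage, quota } = await navigator.storage.estimate();
  return assessStorage(usage, quota);
}

// Ask the browser not to evict this origin's data under storage pressure.
// The browser may decline, so the result has to be checked.
async function requestPersistence(): Promise<boolean> {
  if (typeof navigator === "undefined" || !navigator.storage?.persist) {
    return false;
  }
  return navigator.storage.persist();
}
```

Running a check like this before a write, rather than after a failed one, is what lets the warning arrive while the user can still export.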

None of these are edge cases. For someone managing chronic illness, every single one of these scenarios is likely to happen eventually.

An app that fails in any of these ways is not just inconvenient. It is actively harmful to someone who depends on it during a difficult period.

Why this is a developer problem, not just a product problem

The default stack for most apps (user account, server database, REST API) is not wrong. It is just the wrong default for this category of user.

Developers make architectural choices early, and those choices compound. When you reach for Firebase on day one, you have committed to the user's data living on Google's infrastructure. When you require an account before showing any value, you have filtered out the users with the lowest tolerance for authentication friction.

Local-first requires you to think about trust boundaries before you write any feature code. It asks you to treat the browser as a capable runtime, not just a rendering layer. It asks you to consider what happens when your backend is unavailable, deprecated, or compromised.

These are harder questions. They are also the right questions for any software that handles vulnerable people's data.

PainTracker is live at https://www.paintracker.ca

It is open source, offline-first, and free to use without an account.

If you build health software, I want you to question your default stack.

If you live with chronic pain, I want to know what current trackers get wrong.

If you care about privacy, I want feedback on the local-first architecture.

The goal is simple: health software should not make vulnerable people more vulnerable.